How to enable case insensitive search in AEM with Lucene?

This tutorial explains how to enable case insensitive search in AEM with Lucene.

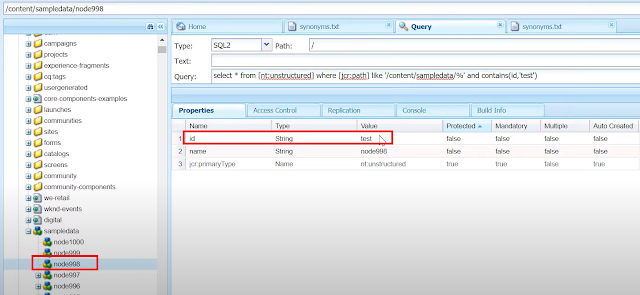

I have two nodes under /content/sampledata, each with an "id" property holding the same value in a different case, e.g. TEST and test.

By default, Lucene search is case sensitive, so a query for "test" returns only the node whose value matches exactly: the node with the value "test".

Case-insensitive search can be enabled through analyzers in the Lucene index.

Refer to the following URL to configure a custom Lucene index: https://www.albinsblog.com/2020/04/oak-lucene-index-improve-query-in-aem-configure-lucene-index.html#.Xu7oD2hKjb1

Analysis, in Lucene, is the process of converting field text into its most fundamental indexed representation, terms. These terms are used to determine what documents match a query during searching.

An analyzer tokenizes text by performing any number of operations on it, which could include extracting words, discarding punctuation, removing accents from characters, lowercasing (also called normalizing), removing common words, reducing words to a root form (stemming), or changing words into the basic form (lemmatization).

In Lucene, an analyzer is a Java class that implements a specific analysis. Analysis occurs any time text needs to be converted into terms, which in Lucene’s core happens at two points: during indexing and when searching.
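To make the "two points" idea concrete, here is a minimal sketch of a toy inverted index in plain Java. It is not Lucene's API: the class name and the node names node1 and node2 are hypothetical, chosen only to mirror the TEST/test example. It shows that a lookup becomes case insensitive only when the same normalization is applied both when the value is indexed and when the search text is processed.

```java
import java.util.ArrayList;
import java.util.Collections;
import java.util.HashMap;
import java.util.List;
import java.util.Locale;
import java.util.Map;
import java.util.function.UnaryOperator;

// Toy inverted index, NOT Lucene's API: it only illustrates why the same
// normalization must run at index time and at query time.
class AnalysisAtBothEndsSketch {

    private final Map<String, List<String>> termToPaths = new HashMap<>();
    private final UnaryOperator<String> normalizer;

    AnalysisAtBothEndsSketch(UnaryOperator<String> normalizer) {
        this.normalizer = normalizer;
    }

    // Index time: normalize the property value before storing it as a term.
    void index(String path, String value) {
        termToPaths.computeIfAbsent(normalizer.apply(value), k -> new ArrayList<>()).add(path);
    }

    // Query time: normalize the search text the same way before the lookup.
    List<String> search(String text) {
        return termToPaths.getOrDefault(normalizer.apply(text), Collections.emptyList());
    }

    // Hypothetical node names under /content/sampledata, for illustration only.
    static AnalysisAtBothEndsSketch sample(UnaryOperator<String> normalizer) {
        AnalysisAtBothEndsSketch idx = new AnalysisAtBothEndsSketch(normalizer);
        idx.index("/content/sampledata/node1", "TEST");
        idx.index("/content/sampledata/node2", "test");
        return idx;
    }

    public static void main(String[] args) {
        // No normalization: terms keep their case, so only the exact match is found.
        System.out.println(sample(UnaryOperator.identity()).search("test"));
        // prints [/content/sampledata/node2]

        // Lowercasing on both sides: both nodes are found.
        System.out.println(sample(s -> s.toLowerCase(Locale.ROOT)).search("test"));
        // prints [/content/sampledata/node1, /content/sampledata/node2]
    }
}
```

If only the index side were lowercased, a search for "TEST" would find nothing, which is why the analyzer has to be applied on both sides.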

An analyzer chain starts with a Tokenizer, to produce initial tokens from the characters read from a Reader, then modifies the tokens with any number of chained TokenFilters.
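The chain idea can be sketched in plain Java as a tokenizer function followed by a list of filter functions. This is a simplified model under my own assumptions, not Lucene's Tokenizer or TokenFilter classes: every name below is hypothetical, the tokenizer only splits on whitespace, and the stop-word list is deliberately tiny.

```java
import java.util.ArrayList;
import java.util.Arrays;
import java.util.List;
import java.util.Locale;
import java.util.function.Function;
import java.util.function.UnaryOperator;

// Toy model of an analyzer chain: one tokenizer, then any number of filters.
// All names here are hypothetical and are NOT Lucene classes.
class AnalyzerChainSketch {

    private final Function<String, List<String>> tokenizer;
    private final List<UnaryOperator<List<String>>> filters;

    AnalyzerChainSketch(Function<String, List<String>> tokenizer,
                        List<UnaryOperator<List<String>>> filters) {
        this.tokenizer = tokenizer;
        this.filters = filters;
    }

    // Run the tokenizer first, then pass the tokens through each filter in order.
    List<String> analyze(String text) {
        List<String> tokens = tokenizer.apply(text);
        for (UnaryOperator<List<String>> filter : filters) {
            tokens = filter.apply(tokens);
        }
        return tokens;
    }

    // Simplified tokenizer: splits on runs of whitespace.
    static List<String> splitOnWhitespace(String text) {
        List<String> out = new ArrayList<>();
        for (String t : text.trim().split("\\s+")) {
            if (!t.isEmpty()) out.add(t);
        }
        return out;
    }

    // Filter that lowercases every token.
    static List<String> lowerCase(List<String> tokens) {
        List<String> out = new ArrayList<>();
        for (String t : tokens) out.add(t.toLowerCase(Locale.ROOT));
        return out;
    }

    // Filter that drops a tiny, illustrative set of stop words.
    static List<String> removeStopWords(List<String> tokens) {
        List<String> stopWords = Arrays.asList("the", "a");
        List<String> out = new ArrayList<>();
        for (String t : tokens) {
            if (!stopWords.contains(t)) out.add(t);
        }
        return out;
    }

    static AnalyzerChainSketch lowerCasingChain() {
        List<UnaryOperator<List<String>>> filters = new ArrayList<>();
        filters.add(AnalyzerChainSketch::lowerCase);
        filters.add(AnalyzerChainSketch::removeStopWords);
        return new AnalyzerChainSketch(AnalyzerChainSketch::splitOnWhitespace, filters);
    }

    public static void main(String[] args) {
        System.out.println(lowerCasingChain().analyze("The TEST Value")); // prints [test, value]
    }
}
```

Filter order matters in this sketch: the stop-word filter only sees "the" because the lowercase filter ran before it.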

Let’s look at some of the important built-in analyzers available in the Lucene bundle:

WhitespaceAnalyzer, as the name implies, splits text into tokens on whitespace characters and makes no other effort to normalize the tokens. It doesn’t lowercase each token.

SimpleAnalyzer first splits tokens at non-letter characters, then lowercases each token. Be careful! Because it splits at every non-letter character, this analyzer quietly discards numeric characters along with punctuation.

StopAnalyzer is the same as SimpleAnalyzer, except it removes common words. By default, it removes common words specific to the English language (the, a, etc.), though you can pass in your own set.

KeywordAnalyzer treats entire text as a single token.

StandardAnalyzer is Lucene’s most sophisticated core analyzer. It uses grammar-based rules to identify tokens (Unicode word-boundary rules in current versions; older versions also recognized certain kinds of tokens such as company names, email addresses, and hostnames). It also lowercases each token and removes stop words and punctuation.
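The differences between the simpler analyzers are easier to see side by side. The sketch below approximates WhitespaceAnalyzer, SimpleAnalyzer, and KeywordAnalyzer with plain Java string operations. These are rough imitations written for illustration, not the Lucene classes themselves, and the class name, method names, and sample text are assumptions of mine.

```java
import java.util.ArrayList;
import java.util.Collections;
import java.util.List;
import java.util.Locale;

// Rough stdlib-only approximations of three built-in analyzers.
// These imitate the documented behavior; they are NOT the Lucene classes.
class BuiltInAnalyzerSketch {

    // Like WhitespaceAnalyzer: split on whitespace, leave case untouched.
    static List<String> whitespace(String text) {
        List<String> out = new ArrayList<>();
        for (String t : text.split("\\s+")) {
            if (!t.isEmpty()) out.add(t);
        }
        return out;
    }

    // Like SimpleAnalyzer: split at non-letter characters, then lowercase.
    static List<String> simple(String text) {
        List<String> out = new ArrayList<>();
        for (String t : text.split("[^\\p{L}]+")) {
            if (!t.isEmpty()) out.add(t.toLowerCase(Locale.ROOT));
        }
        return out;
    }

    // Like KeywordAnalyzer: the entire text is a single token.
    static List<String> keyword(String text) {
        return Collections.singletonList(text);
    }

    public static void main(String[] args) {
        String text = "AEM Lucene-Index 2020";
        System.out.println(whitespace(text)); // prints [AEM, Lucene-Index, 2020]
        System.out.println(simple(text));     // prints [aem, lucene, index]
        System.out.println(keyword(text));    // prints [AEM Lucene-Index 2020]
    }
}
```

Note how the approximated SimpleAnalyzer drops "2020" entirely, while only the lowercasing variant would make TEST and test produce the same term.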

The built-in analyzers can be used directly by specifying the class name, or an analyzer can be composed from a tokenizer and a series of filters. There are multiple tokenizers (e.g. Standard, Keyword, CharTokenizers) and token filters (e.g. Stop, LowerCase, Standard). Examples of analyzer class names:

org.apache.lucene.analysis.standard.StandardAnalyzer

org.apache.lucene.analysis.core.SimpleAnalyzer

There are multiple standard analyzers, tokenizers, and token filters; custom analyzers, tokenizers, and token filters can be created if required.

Analyzers are configured via the analyzers node (of type nt:unstructured) inside the oak:index definition.

The default analyzer for an index is configured in the default child node (of type nt:unstructured) of the analyzers node.

Create a child node named "tokenizer" of type "nt:unstructured" under the default node and add the property "name" with the value "Standard".

Create a child node named "filters" of type "nt:unstructured" under the default node.

Create a child node named "LowerCase" of type "nt:unstructured" under the filters node.

This lowercases the data when it is indexed and also lowercases the search text at query time, so matching becomes case insensitive.

The configuration is ready now, so let us index the data. Change the value of the reindex property under the custom index to true. This initiates the re-indexing, and the property value is changed back to false once the re-indexing is initiated.

Now let me re-execute the query. This time the query returns both nodes, the one with the uppercase value and the one with the lowercase value (TEST and test).

Sample Configuration - https://github.com/techforum-repo/youttubedata/tree/master/lucene
