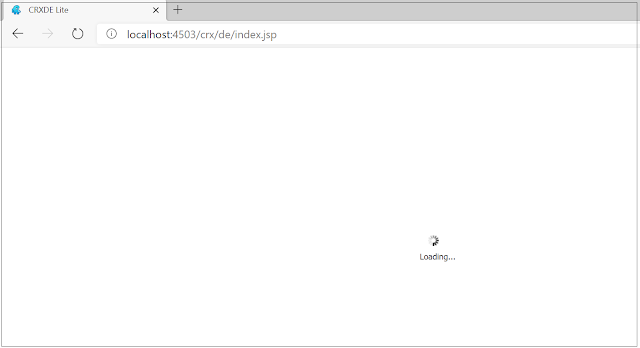

CRXDE and Package Manager not accessible on an AEM publisher - com.day.crx.delite.impl.servlets.InitServlet Error while retrieving infos

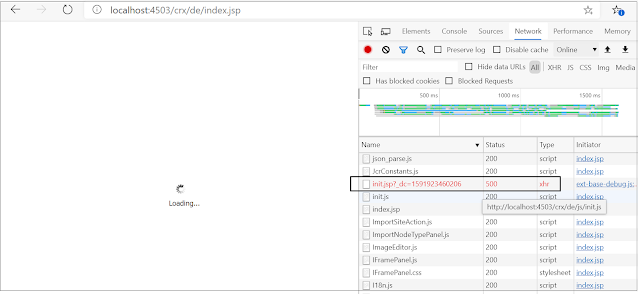

The response to the "http://localhost:4503/crx/de/init.jsp?_dc=1591923460206" service was 500

11.06.2020 19:49:20.558 *ERROR* [qtp1936302775-72] com.day.crx.delite.impl.servlets.InitServlet Error while retrieving infos

java.lang.NullPointerException: null

at com.day.crx.delite.impl.servlets.InitServlet.getFormattedName(InitServlet.java:126) [com.adobe.granite.crxde-lite:1.1.42]

at com.day.crx.delite.impl.servlets.InitServlet.doService(InitServlet.java:84) [com.adobe.granite.crxde-lite:1.1.42]

at com.day.crx.delite.impl.AbstractServlet.service(AbstractServlet.java:52) [com.adobe.granite.crxde-lite:1.1.42]

at com.day.crx.delite.impl.MainServlet.doService(MainServlet.java:132) [com.adobe.granite.crxde-lite:1.1.42]

at com.day.crx.delite.impl.MainServlet.service(MainServlet.java:109) [com.adobe.granite.crxde-lite:1.1.42]

at org.apache.felix.http.base.internal.handler.ServletHandler.handle(ServletHandler.java:123) [org.apache.felix.http.jetty:4.0.8]

at org.apache.felix.http.base.internal.dispatch.InvocationChain.doFilter(InvocationChain.java:86) [org.apache.felix.http.jetty:4.0.8]

at com.adobe.granite.license.impl.LicenseCheckFilter.doFilter(LicenseCheckFilter.java:308) [com.adobe.granite.license:1.2.10]

at org.apache.felix.http.base.internal.handler.FilterHandler.handle(FilterHandler.java:142) [org.apache.felix.http.jetty:4.0.8]

at org.apache.felix.http.base.internal.dispatch.InvocationChain.doFilter(InvocationChain.java:81) [org.apache.felix.http.jetty:4.0.8]

at org.apache.sling.i18n.impl.I18NFilter.doFilter(I18NFilter.java:131) [org.apache.sling.i18n:2.5.14]

at org.apache.felix.http.base.internal.handler.FilterHandler.handle(FilterHandler.java:142) [org.apache.felix.http.jetty:4.0.8]

at org.apache.felix.http.base.internal.dispatch.InvocationChain.doFilter(InvocationChain.java:81) [org.apache.felix.http.jetty:4.0.8]

at org.apache.felix.http.base.internal.dispatch.Dispatcher$1.doFilter(Dispatcher.java:146) [org.apache.felix.http.jetty:4.0.8]

at org.apache.felix.http.base.internal.whiteboard.WhiteboardManager$2.doFilter(WhiteboardManager.java:1002) [org.apache.felix.http.jetty:4.0.8]

at org.apache.sling.security.impl.ReferrerFilter.doFilter(ReferrerFilter.java:326) [org.apache.sling.security:1.1.16]

at org.apache.felix.http.base.internal.handler.PreprocessorHandler.handle(PreprocessorHandler.java:136) [org.apache.felix.http.jetty:4.0.8]

at org.apache.felix.http.base.internal.whiteboard.WhiteboardManager$2.doFilter(WhiteboardManager.java:1008) [org.apache.felix.http.jetty:4.0.8]

at org.apache.felix.http.sslfilter.internal.SslFilter.doFilter(SslFilter.java:97) [org.apache.felix.http.sslfilter:1.2.6]

at org.apache.felix.http.base.internal.handler.PreprocessorHandler.handle(PreprocessorHandler.java:136) [org.apache.felix.http.jetty:4.0.8]

at org.apache.felix.http.base.internal.whiteboard.WhiteboardManager$2.doFilter(WhiteboardManager.java:1008) [org.apache.felix.http.jetty:4.0.8]

at org.apache.felix.http.base.internal.whiteboard.WhiteboardManager.invokePreprocessors(WhiteboardManager.java:1012) [org.apache.felix.http.jetty:4.0.8]

at org.apache.felix.http.base.internal.dispatch.Dispatcher.dispatch(Dispatcher.java:91) [org.apache.felix.http.jetty:4.0.8]

at org.apache.felix.http.base.internal.dispatch.DispatcherServlet.service(DispatcherServlet.java:49) [org.apache.felix.http.jetty:4.0.8]

at javax.servlet.http.HttpServlet.service(HttpServlet.java:725) [org.apache.felix.http.servlet-api:1.1.2]

at org.eclipse.jetty.servlet.ServletHolder.handle(ServletHolder.java:873) [org.apache.felix.http.jetty:4.0.8]

at org.eclipse.jetty.servlet.ServletHandler.doHandle(ServletHandler.java:542) [org.apache.felix.http.jetty:4.0.8]

at org.eclipse.jetty.server.handler.ScopedHandler.nextHandle(ScopedHandler.java:255) [org.apache.felix.http.jetty:4.0.8]

at org.eclipse.jetty.server.session.SessionHandler.doHandle(SessionHandler.java:1701) [org.apache.felix.http.jetty:4.0.8]

at org.eclipse.jetty.server.handler.ScopedHandler.nextHandle(ScopedHandler.java:255) [org.apache.felix.http.jetty:4.0.8]

at org.eclipse.jetty.server.handler.ContextHandler.doHandle(ContextHandler.java:1345) [org.apache.felix.http.jetty:4.0.8]

at org.eclipse.jetty.server.handler.ScopedHandler.nextScope(ScopedHandler.java:203) [org.apache.felix.http.jetty:4.0.8]

at org.eclipse.jetty.servlet.ServletHandler.doScope(ServletHandler.java:480) [org.apache.felix.http.jetty:4.0.8]

at org.eclipse.jetty.server.session.SessionHandler.doScope(SessionHandler.java:1668) [org.apache.felix.http.jetty:4.0.8]

at org.eclipse.jetty.server.handler.ScopedHandler.nextScope(ScopedHandler.java:201) [org.apache.felix.http.jetty:4.0.8]

at org.eclipse.jetty.server.handler.ContextHandler.doScope(ContextHandler.java:1247) [org.apache.felix.http.jetty:4.0.8]

at org.eclipse.jetty.server.handler.ScopedHandler.handle(ScopedHandler.java:144) [org.apache.felix.http.jetty:4.0.8]

at org.eclipse.jetty.server.handler.ContextHandlerCollection.handle(ContextHandlerCollection.java:220) [org.apache.felix.http.jetty:4.0.8]

at org.eclipse.jetty.server.handler.HandlerWrapper.handle(HandlerWrapper.java:132) [org.apache.felix.http.jetty:4.0.8]

at org.eclipse.jetty.server.Server.handle(Server.java:502) [org.apache.felix.http.jetty:4.0.8]

at org.eclipse.jetty.server.HttpChannel.handle(HttpChannel.java:370) [org.apache.felix.http.jetty:4.0.8]

at org.eclipse.jetty.server.HttpConnection.onFillable(HttpConnection.java:267) [org.apache.felix.http.jetty:4.0.8]

at org.eclipse.jetty.io.AbstractConnection$ReadCallback.succeeded(AbstractConnection.java:305) [org.apache.felix.http.jetty:4.0.8]

at org.eclipse.jetty.io.FillInterest.fillable(FillInterest.java:103) [org.apache.felix.http.jetty:4.0.8]

at org.eclipse.jetty.io.ChannelEndPoint$2.run(ChannelEndPoint.java:117) [org.apache.felix.http.jetty:4.0.8]

at org.eclipse.jetty.util.thread.strategy.EatWhatYouKill.runTask(EatWhatYouKill.java:333) [org.apache.felix.http.jetty:4.0.8]

at org.eclipse.jetty.util.thread.strategy.EatWhatYouKill.doProduce(EatWhatYouKill.java:310) [org.apache.felix.http.jetty:4.0.8]

at org.eclipse.jetty.util.thread.strategy.EatWhatYouKill.tryProduce(EatWhatYouKill.java:168) [org.apache.felix.http.jetty:4.0.8]

at org.eclipse.jetty.util.thread.strategy.EatWhatYouKill.run(EatWhatYouKill.java:126) [org.apache.felix.http.jetty:4.0.8]

at org.eclipse.jetty.util.thread.ReservedThreadExecutor$ReservedThread.run(ReservedThreadExecutor.java:366) [org.apache.felix.http.jetty:4.0.8]

at org.eclipse.jetty.util.thread.QueuedThreadPool.runJob(QueuedThreadPool.java:765) [org.apache.felix.http.jetty:4.0.8]

at org.eclipse.jetty.util.thread.QueuedThreadPool$2.run(QueuedThreadPool.java:683) [org.apache.felix.http.jetty:4.0.8]

at java.base/java.lang.Thread.run(Thread.java:834)
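To confirm that an instance is hitting this same failure, the log can be searched for the signature shown above. The following is a minimal sketch, not an official tool: the function name `find_init_servlet_failures` is made up for illustration, and it only assumes that the `InitServlet` error line is followed by the `java.lang.NullPointerException` line, as in the trace above.

```python
# The message text is copied from the log entry above.
SIGNATURE = "com.day.crx.delite.impl.servlets.InitServlet Error while retrieving infos"


def find_init_servlet_failures(log_lines):
    """Return the ERROR lines that carry the InitServlet signature and are
    followed (ignoring blank lines) by a NullPointerException line."""
    lines = [line.rstrip("\n") for line in log_lines]
    hits = []
    for index, line in enumerate(lines):
        if "*ERROR*" in line and SIGNATURE in line:
            # Look at the first non-empty line after the error entry.
            following = next((l for l in lines[index + 1:] if l.strip()), "")
            if following.strip().startswith("java.lang.NullPointerException"):
                hits.append(line)
    return hits
```

Typical use would be to pass an open log file, for example `find_init_servlet_failures(open("crx-quickstart/logs/error.log"))`, assuming the instance writes its error log to the default location.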

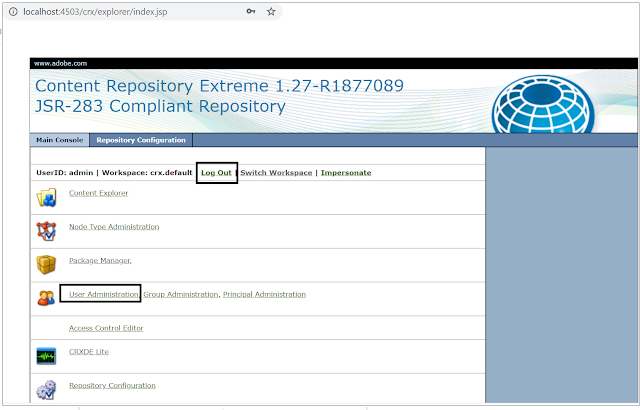

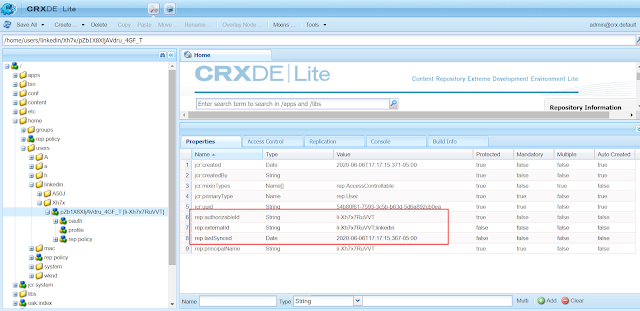

Analysis showed that the issue can occur in either of the following scenarios:

- The anonymous user's read access to its own user node has been removed

- The anonymous user has been deleted by mistake

The root cause in our case was the first scenario: the anonymous user no longer had read permission on its own user node. By default, AEM grants the anonymous user read access to its own user node, and that access had been removed accidentally.

The issue was resolved by re-enabling read access for the anonymous user on its own user node.

Another approach is to delete the anonymous user (or leave it deleted, if it was removed accidentally) and restart the server. The anonymous user is recreated on restart, but unfortunately AEM does not grant the recreated user read access to its own user node; that permission is set only during the initial server setup.

In that case, the read permission must still be granted manually.
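Once the permission is back in place, the fix can be checked by requesting the same `init.jsp` endpoint that returned 500 earlier. This is a minimal sketch under stated assumptions: the helper name `crxde_init_status` is hypothetical, the request is sent without credentials, and a healthy instance is assumed to answer with a status other than 500 (the exact status may depend on how the publisher is secured).

```python
import urllib.error
import urllib.request


def crxde_init_status(base_url, opener=urllib.request.urlopen, timeout=10):
    """Return the HTTP status code of <base_url>/crx/de/init.jsp.

    `opener` is injectable so the function can be exercised without a
    running instance. Connection failures (URLError) are not caught here.
    """
    url = base_url.rstrip("/") + "/crx/de/init.jsp"
    try:
        with opener(url, timeout=timeout) as response:
            return response.status
    except urllib.error.HTTPError as error:
        # urllib raises for 4xx/5xx responses; the status is on the exception.
        return error.code
```

For example, `crxde_init_status("http://localhost:4503")` returning 500 would indicate that the `InitServlet` error is still present.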